AI Integrity in Cyber Defence: Navigating Innovation and Responsibility

Artificial intelligence has become a central component in modern cyber defence strategies, offering advanced capabilities to detect threats, respond to incidents, and manage vulnerabilities. As AI systems grow in sophistication, ethical considerations have moved to the forefront, demanding scrutiny of how these technologies are developed and deployed. Balancing innovation with accountability is essential to maintaining trust and effectiveness in cyber security.

The integration of Artificial Intelligence (AI) into cyber defence systems has brought about transformative changes in how organisations protect themselves against digital threats. As AI-driven technologies become increasingly sophisticated, they offer enhanced capabilities for threat detection, rapid response, and system resilience. However, the deployment of AI within this domain raises important ethical considerations, such as the need for transparency, accountability, and fairness in automated decision-making processes. Balancing technological innovation with responsible practice is essential to ensure that cyber defence strategies remain both effective and ethically sound.

Snapshot of the Current Landscape:

Recent years have seen a surge in the adoption of AI-driven tools across the cyber defence sector. Organisations are leveraging machine learning for real-time threat detection, automated incident response, and predictive analytics to anticipate future risks. The integration of AI into cyber security operations is accelerating, with industry and government bodies actively exploring its potential. However, this progress is matched by growing debate around ethical governance and responsible use.

Key trends shaping the adoption of AI in the current landscape include:

✦ Rapid Adoption: Over 60% of cyber defence organisations in Europe have integrated AI-driven solutions into their security operations as of 2026.

✦ Investment Surge: Global investment in AI-powered cyber security tools reached approximately £15 billion in 2025, marking a 25% year-on-year increase.

✦ Ethical Concerns: 72% of IT professionals cite ethical challenges—such as data privacy, algorithmic bias, and transparency—as major considerations when deploying AI in cyber defence.

✦ Regulatory Developments: The EU’s Artificial Intelligence Act, which entered into force in August 2024 and whose main obligations are due to apply from August 2026, is expected to require organisations to conduct robust ethical risk assessments of AI systems used in cyber security.

✦ Industry Collaboration: Leading technology firms (e.g., Microsoft, IBM, and Darktrace) have formed cross-sector alliances to establish ethical guidelines and best practices for AI in cyber defence.

✦ AI Threat Detection: AI-powered solutions are now responsible for detecting over 85% of all cyber threats in real time, significantly reducing incident response times.

✦ Talent Shortage: There is an estimated shortfall of 30,000 skilled professionals in ethical AI and cyber defence across the UK and Ireland.

✦ Public Trust: According to recent surveys, only 43% of the public fully trust AI systems to manage sensitive cyber defence tasks, underscoring the importance of transparency and accountability.

✦ Notable Initiatives: The Cyber Ethics Consortium (launched in 2025) brings together industry leaders, academics, and regulators to address emerging ethical challenges in AI deployment.

✦ Future Outlook: Experts predict that ethical AI will become a cornerstone of cyber defence strategies by 2030, with increased emphasis on explainable AI, fairness, and human oversight.

Risks and Challenges:

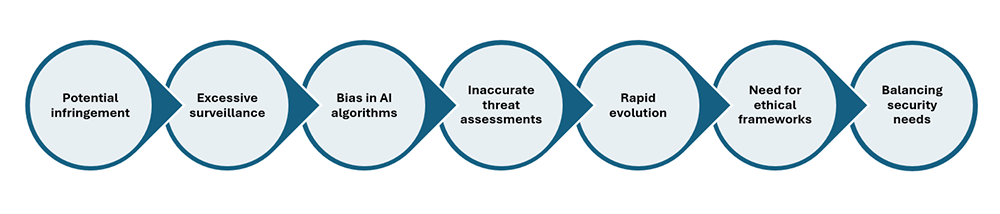

The integration of artificial intelligence (AI) into cyber defence brings forth a host of ethical considerations, risks, and challenges. One primary concern is the potential for AI systems to act autonomously, making decisions that may inadvertently infringe upon privacy or civil liberties. For instance, automated threat detection tools could lead to excessive surveillance or the collection of sensitive personal data without proper oversight.

Another significant risk involves bias in AI algorithms. If the data used to train these systems is unrepresentative or skewed, the resulting models may discriminate against certain groups or produce inaccurate threat assessments. This raises questions about fairness, accountability, and transparency in cyber defence operations.
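One way to make this bias risk measurable is to compare how often benign activity is wrongly flagged for different groups. The snippet below is a minimal sketch under stated assumptions: the record format, the group names, and the helper names `false_positive_rate_by_group` and `max_rate_gap` are hypothetical and invented for illustration, not taken from any particular tool.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Return the false-positive rate per group.

    `records` is an iterable of (group, flagged, is_malicious) tuples.
    This layout is an assumption made for the example.
    """
    false_positives = defaultdict(int)
    benign_total = defaultdict(int)
    for group, flagged, is_malicious in records:
        if not is_malicious:  # only benign activity can be a false positive
            benign_total[group] += 1
            if flagged:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in benign_total.items() if n}


def max_rate_gap(rates):
    """Largest difference in false-positive rate between any two groups."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0


# Hypothetical labelled alerts: (group, flagged by the model, truly malicious)
sample = [
    ("office_a", True, False),
    ("office_a", False, False),
    ("office_a", False, False),
    ("office_a", False, False),
    ("office_b", True, False),
    ("office_b", True, False),
    ("office_b", False, False),
    ("office_b", False, False),
    ("office_b", True, True),
]

rates = false_positive_rate_by_group(sample)
gap = max_rate_gap(rates)
print(rates, gap)
```

A large gap does not prove discrimination on its own, but it is a reasonable signal that the training data or the model deserves a closer review.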

There are also challenges related to the rapid evolution of cyber threats. AI can adapt and learn, but adversaries can exploit vulnerabilities in AI-driven defences, leading to an ongoing arms race between attackers and defenders. Robust ethical frameworks and human oversight are needed to maintain trust. The key risks and challenges can be summarised as follows:

✦ Potential infringement on privacy and civil liberties due to autonomous AI decision-making.

✦ Excessive surveillance and collection of sensitive personal data without adequate oversight.

✦ Bias in AI algorithms resulting from unrepresentative or skewed training data.

✦ Discrimination against certain groups and inaccurate threat assessments.

✦ Rapid evolution of cyber threats leading to vulnerabilities in AI-driven defences.

✦ Need for ethical frameworks and human oversight to maintain trust.

✦ Challenges in balancing security needs with ethical principles, requiring thoughtful regulation and dialogue.

Finally, policymakers and practitioners must carefully balance security needs with ethical principles, fostering dialogue and regulation to guide responsible AI development in cyber defence.

To address these challenges, organisations are adopting robust governance structures and ethical frameworks. Best practices include regular audits of AI models, clear documentation of decision-making processes, and adherence to compliance standards. Collaboration between industry, academia, and policymakers is vital to establish guidelines that promote responsible AI use. Training and awareness programmes further support ethical deployment by fostering a culture of accountability.

The integration of artificial intelligence (AI) into cyber defence has introduced significant ethical considerations for organisations and practitioners. As AI systems become more sophisticated in detecting, preventing, and responding to cyber threats, it is essential to ensure their deployment aligns with ethical principles and industry standards.

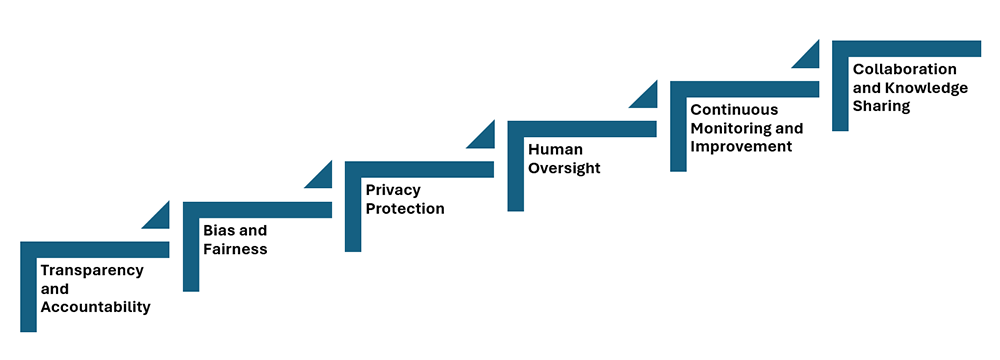

✦ Transparency and Accountability: Organisations must ensure that AI-driven cyber defence tools operate transparently, with clear documentation of decision-making processes. Accountability mechanisms should be in place to address errors or unintended consequences.

✦ Bias and Fairness: To prevent discrimination, it is crucial to regularly audit AI algorithms for bias. Training data should be diverse, and results should be monitored to ensure fair treatment of all users and entities.

✦ Privacy Protection: AI systems in cyber defence often process sensitive data. Adhering to data protection regulations, minimising data collection, and anonymising information where possible are best practices to safeguard individual privacy.

✦ Human Oversight: While AI can automate many aspects of cyber defence, human experts should remain involved in critical decision-making, especially in situations requiring ethical judgement or where the consequences of errors are significant.

✦ Continuous Monitoring and Improvement: Ethical AI deployment is an ongoing process. Regular reviews and updates of AI systems help address evolving threats, technological advances, and emerging ethical challenges.

✦ Collaboration and Knowledge Sharing: Industry collaboration helps establish and maintain best practices. Sharing experiences, challenges, and solutions across organisations fosters a community committed to ethical AI use in cyber defence.

By adhering to these best practices, organisations can leverage AI to enhance their cyber defence capabilities while maintaining public trust and upholding ethical standards.
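The privacy protection practice above (minimising data collection and anonymising where possible) can be illustrated with a short sketch. It assumes a security event is a simple dictionary. The field names and the helpers `pseudonymise` and `minimise_event` are hypothetical. Keyed hashing with HMAC-SHA256 gives pseudonymisation rather than full anonymisation, so the output should still be handled as personal data.

```python
import hashlib
import hmac


def pseudonymise(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256, hex encoded)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimise_event(event: dict, key: bytes,
                   keep=("timestamp", "event_type"),
                   pseudonymise_fields=("user", "source_ip")) -> dict:
    """Keep only the fields needed for detection and mask direct identifiers.

    Any field not listed in `keep` or `pseudonymise_fields` is dropped.
    """
    reduced = {name: event[name] for name in keep if name in event}
    for name in pseudonymise_fields:
        if name in event:
            reduced[name] = pseudonymise(str(event[name]), key)
    return reduced


# Hypothetical event and key; a real key would come from a secrets manager.
secret_key = b"example-key-not-for-production"
raw_event = {
    "timestamp": "2026-01-15T09:30:00Z",
    "event_type": "login_failure",
    "user": "alice@example.com",
    "source_ip": "10.0.0.5",
    "payload": "free-text details that the detector does not need",
}

safe_event = minimise_event(raw_event, secret_key)
print(safe_event)
```

Because the same key always produces the same hash, analysts can still correlate events from one user or address without seeing the raw identifier.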

Balancing Innovation with Accountability:

Responsible AI deployment requires a strategic approach that integrates ethical considerations into every stage of development and operation. Strategies include establishing clear accountability mechanisms, investing in explainable AI, and engaging stakeholders in oversight. By prioritising transparency and fairness, organisations can harness the benefits of AI while safeguarding against ethical pitfalls.

The integration of artificial intelligence (AI) into cyber defence strategies is transforming the way organisations and governments protect digital assets. AI-driven systems are capable of rapidly detecting and responding to threats, analysing vast amounts of data, and adapting to evolving cyber risks. However, this technological leap comes with significant ethical considerations that must be addressed to ensure responsible deployment.

One of the primary ethical challenges involves maintaining accountability in automated decision-making. As AI systems become more autonomous, it is crucial to establish clear guidelines regarding who is responsible for actions taken by these technologies. Transparency in algorithmic processes and robust oversight mechanisms are necessary to prevent unintended consequences, such as unjustified surveillance or discrimination.
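The accountability and oversight described here can be sketched as a simple routing rule: low-impact, high-confidence actions proceed automatically, everything else waits for a human, and every decision is logged with a named responsible party. The class names, the 0.9 threshold, and the impact labels below are illustrative assumptions, not a prescribed standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    action: str        # e.g. "quarantine_file"
    confidence: float  # model confidence between 0.0 and 1.0
    impact: str        # "low" or "high" (hypothetical labels)


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, decision: Decision, outcome: str, responsible: str) -> None:
        """Store what was decided, by whom, and when."""
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": decision.action,
            "confidence": decision.confidence,
            "impact": decision.impact,
            "outcome": outcome,
            "responsible": responsible,
        })


def route(decision: Decision, log: AuditLog, min_confidence: float = 0.9) -> str:
    """Auto-approve only low-impact, high-confidence actions."""
    if decision.impact == "high" or decision.confidence < min_confidence:
        outcome, responsible = "pending_review", "human_analyst"
    else:
        outcome, responsible = "auto_approved", "ai_system"
    log.record(decision, outcome, responsible)
    return outcome


log = AuditLog()
first = route(Decision("quarantine_file", 0.97, "low"), log)
second = route(Decision("disable_account", 0.99, "high"), log)
third = route(Decision("block_ip", 0.60, "low"), log)
print(first, second, third, len(log.entries))
```

Keeping the threshold and impact labels in one place makes them easy to review, which supports the transparency the paragraph calls for.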

Furthermore, the use of AI in cyber defence raises questions about privacy and the potential for misuse. While AI can enhance security, it may also infringe upon individual rights if not governed appropriately. Striking a balance between safeguarding digital infrastructures and respecting civil liberties requires ongoing dialogue among stakeholders, including technologists, policymakers, and the public.

To achieve ethical innovation in cyber defence, organisations must prioritise fairness, accountability, and transparency in their AI systems. This involves regular auditing, ethical training for developers, and the implementation of frameworks that guide responsible use. Ultimately, the goal is to harness the benefits of AI while upholding ethical standards that protect both society and the integrity of digital environments.

The future of AI in cyber defence is promising, but it hinges on a steadfast commitment to ethical principles. By following best practices, addressing risks, and promoting responsible innovation, cyber security professionals and policymakers can ensure AI technologies protect critical assets without compromising integrity. Continued collaboration and vigilance will be essential as the ethical landscape evolves.

About the Author:

Ms. Kavitha Srinivasulu

CCISO | DPO | DTO | CISA | CRISC | CISM | CGEIT | PCSM | IAPP AIGP | ISO42001: LA

Program Director: Cybersecurity & Data Privacy

Tata Consultancy Services

Ms. Kavitha Srinivasulu is an award-winning technology leader.

Ms. Kavitha Srinivasulu is a Senior Cyber Risk and Resilience executive with over 22 years of global leadership experience advising Boards and Executive Committees across Financial Services, Healthcare, Retail, Technology, and regulated industries.

Ms. Kavitha Srinivasulu has delivered and led large-scale, regulator-driven cybersecurity, AI-driven, PCI, and SOC transformations for Tier-1 banks, global healthcare organisations, and highly regulated enterprises operating across the UK, EU, USA, APAC, and ANZ.

Ms. Kavitha Srinivasulu is a trusted advisor to Boards, C-suite, regulators, and global enterprises, consistently delivering resilient, compliant, and scalable cyber operating models.

Ms. Kavitha Srinivasulu is an Advisory Member of the National Cyber Defence Research Centre (NCDRC).

Ms. Kavitha Srinivasulu is a Board Member of Women in CyberSecurity (WiCyS) India.

Ms. Kavitha Srinivasulu is an Executive Committee Member of the CyberEdBoard Community.

Ms. Kavitha Srinivasulu is an ambassador at ISAC.

Ms. Kavitha Srinivasulu holds the following licences and certifications:

https://www.linkedin.com/in/ka

https://www.linkedin.com/in/ka

Ms. Kavitha Srinivasulu can be contacted at:

Disclaimer:

“The views and opinions expressed by Ms. Kavitha Srinivasulu in this article are solely her own and do not represent the views of her company or her customers.”

Also read Ms. Kavitha’s earlier article: